Cyber Threat Intelligence (CTI) is critical for identifying and mitigating cyber threats. However, malicious actors use disinformation (deliberately false information) and manipulation tactics to compromise CTI systems, mislead analysts, and disrupt defense mechanisms. Understanding these risks is essential for improving the integrity of CTI operations.

Tactics of Disinformation and Manipulation in CTI

Disinformation Techniques

- False Indicators of Compromise (IOCs): Misleading data to mask attackers or misdirect investigations.

- Fake Attribution: Assigning responsibility to incorrect groups, often to stir geopolitical tensions.

- Misinformation Campaigns: Spreading rumors or inaccurate analysis to erode trust in legitimate intelligence.

Manipulation Methods

- CTI Feed Tampering: Compromising intelligence feeds to insert misleading information.

- Data Poisoning: Infiltrating machine-learning systems to reduce detection accuracy.

- Noise Amplification: Overloading CTI platforms with irrelevant data to obscure real threats.

Impacts of Disinformation and Manipulation

- Erosion of Trust: Organizations may lose confidence in CTI platforms and providers.

- Resource Wastage: Responding to false alarms or investigating fabricated threats diverts resources from real issues.

- Disruption of Collaboration: Shared intelligence networks are less effective when disinformation spreads.

Case Studies:

- 2016 U.S. Election Interference: Cyber campaigns amplified by disinformation on social media platforms.

- False Flag Operations: Advanced Persistent Threat (APT) groups framing others to avoid detection and mislead investigations.

Defensive Strategies

- Source Verification: Cross-check intelligence with multiple trusted sources to ensure accuracy.

- Automated Validation Tools: Use AI/ML systems to detect anomalies in threat data.

- Collaborative Intelligence Sharing: Engage in trusted forums like ISACs to validate information.

- Awareness Training: Educate teams to identify and mitigate disinformation tactics.

Disinformation in financial systems refers to the intentional spread of false information to influence market behaviors, destabilize economies, or achieve strategic objectives. Manipulation involves deliberate actions, such as exploiting market inefficiencies or leveraging misinformation for financial gain or geopolitical advantage. Both tactics threaten financial stability and investor trust.

Key Example

- Market Manipulation: Dissemination of false reports about corporate performance or economic policies to influence stock or currency prices.

- Social Media Campaigns: Coordinated misinformation, such as orchestrated short squeezes or false cryptocurrency announcements (e.g., pump-and-dump schemes).

- Algorithmic Manipulation: High-frequency trading (HFT) and spoofing create phantom liquidity, exacerbating market volatility.

- State-Sponsored Disinformation: Countries engage in economic warfare by spreading propaganda targeting rival economies or currencies.

Impacts

- Market Instability: Loss of investor confidence, rapid devaluation of assets, and liquidity crises.

- Economic Destabilization: Systemic risks to financial institutions and markets, exacerbating economic downturns.

- National Security Risks: Disinformation campaigns can undermine sovereign debt markets or currency stability.

Defense Mechanisms

- Regulation and Oversight:

- Securities laws such as the U.S. SEC rules and the EU’s Market Abuse Regulation (MAR) combat disinformation and manipulation.

- Technological Countermeasures:

- AI-driven monitoring systems detect and flag anomalies in trading and online narratives.

- Blockchain technology enhances transparency in financial transactions.

- Public Awareness:

- Education initiatives promote media literacy and awareness of financial scams.

- Corporate Defenses:

- Rapid response teams address misinformation, while enhanced cybersecurity prevents breaches.

Emerging Threats

- Deepfake Technology: AI-generated media could simulate executives’ voices or videos to spread false financial announcements.

- Cryptocurrency Manipulation: Decentralized finance (DeFi) platforms are particularly vulnerable to misinformation and manipulation.

- Hybrid Warfare: State-sponsored campaigns target financial systems as a component of broader geopolitical conflicts.

The healthcare and pharmaceutical industries are critical to maintaining global public health, yet they are frequently plagued by disinformation and unethical practices. These challenges undermine public trust, reduce the effectiveness of health interventions, and disproportionately affect vulnerable populations. This paper examines key examples of disinformation and manipulation, their sources, and the resultant impact on society.

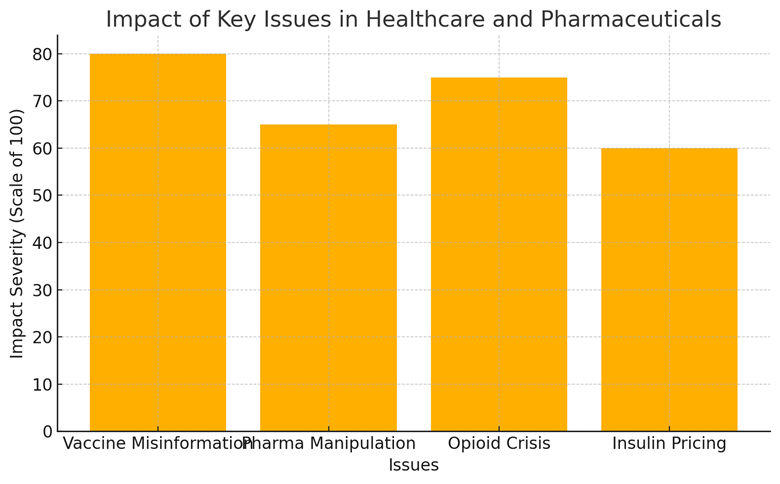

A variety of issues dominate the landscape of disinformation and manipulation, including vaccine misinformation, unethical pharmaceutical practices, the opioid crisis, and insulin pricing. The bar chart below illustrates the relative impact of these issues on public health, scored on a scale of 100 based on severity.

Impact of Key Issues in Healthcare and Pharmaceuticals

The findings highlight the multifaceted nature of disinformation and manipulation within healthcare. Vaccine misinformation has been linked to outbreaks of preventable diseases, while unethical pharmaceutical practices contribute to rising healthcare costs and public distrust. The rise in manipulation cases over time suggests a need for stricter regulatory oversight and public accountability. Social media’s dominant role as a misinformation source calls for targeted interventions to promote health literacy and credible content dissemination.

Critical infrastructure, encompassing sectors such as energy, healthcare, transportation, and communication, is increasingly vulnerable to disinformation and manipulation. These tactics exploit societal, technical, and operational vulnerabilities to disrupt essential systems and erode public trust.

Key Areas of Impact

Public Trust and Perception

- Erosion of Trust: Disinformation undermines confidence in infrastructure reliability (e.g., vaccine logistics during COVID-19).

- Panic Inducement: False narratives about outages or contamination create unnecessary fear, such as during natural disasters.

Cyber Manipulation

- False Alarms: Cyber attackers use fake warnings or ransom claims to amplify impact.

- Operational Data Alteration: Manipulated data misleads operators, causing potentially harmful decisions (e.g., water treatment settings).

Election Infrastructure

- Confidence Undermining: False claims of voter fraud compromise trust in results.

- Voter Suppression: Disinformation about polling logistics reduces turnout.

Energy Sector Disinformation

- Pipeline Attacks: Panic induced by misinformation, such as during the 2021 Colonial Pipeline incident.

- Climate Narratives: Misinformation about energy sources hampers critical advancements.

Social Engineering

- Phishing and Influence Campaigns: Manipulating insiders through targeted communication.

Tactics and Tools

- Bots and Trolls: Amplify disinformation on social platforms.

- Deepfakes: Convincing yet false content targeting stakeholders.

- Fake News Websites: Dissemination of fabricated reports.

- Data Breach Exploitation: Leaked or altered data misused to incite distrust.

Case Studies

- 2016 U.S. Election: Disinformation campaigns targeted election systems, fostering mistrust.

- Ukraine Power Grid Attacks (2015–2016): Cyber and misinformation tactics destabilized public confidence.

- Florida Water Treatment Plant Incident (2021): Manipulated parameters paired with misinformation caused concern despite limited direct impact.

Mitigation Strategies

- Public Awareness: Educating citizens to verify sources and identify disinformation.

- Rapid Response Protocols: Addressing misinformation in real time.

- Technological Solutions: AI-driven detection of fake content, bots, and deepfakes.

- Collaborative Efforts: Coordination between governments, private sectors, and international bodies.